4G/LTE - Test


Test and Verification

Since I have spent the longest part of my career in Test/Verification, this is probably the area where I have the most to say. However, it has had the least content on ShareTechnote. I have long been planning to start posting on this area, but I never got a chance to get started. Sometimes I find it difficult to say even a single word when there are too many things to say :)

Recently I was suggested/pushed to say something on this :), and I thought it was a good chance to start. I am not sure how long (months? years?) it will take to post a critical mass of content, but I am starting anyway.

Basic Terminologies

As in any area of technology, Test/Verification covers a wide spectrum and has a long history. In any such field you will run into a lot of terminology that often sounds unclear or confusing. Following is a list of terminology at the highest level (not even getting into any details), in random order. Do all of these sound clear to you? Or are you still confused? Don't get too disappointed even if you are still confused. I am still confused as well :). In many cases these terms are not used in a clear manner: sometimes people use the same word for different things, and other times they use different words for the same thing. It happens in any language and any dialog. So my recommendation is 'Don't focus on the definition of these terms. Just focus on the details of what you have to do in your testing/verification task'. Any good-quality test/verification document is very clear on the exact, detailed step-by-step procedure for each test. (I will create another document describing what makes a good test document and what makes a bad one later when I have a chance... too many things to talk about on this :))

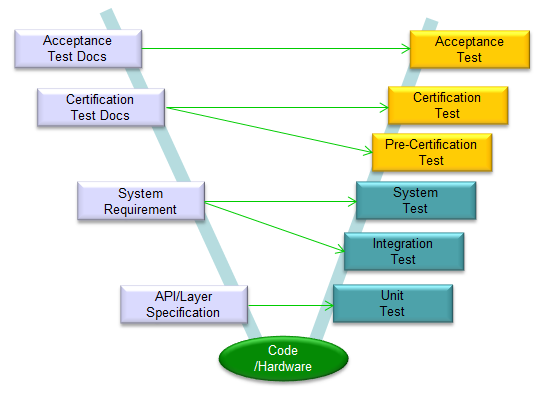

V Model for Testing Cellular Technology

If you were formally trained in testing or have looked into a formal textbook, you might have seen the concept of the 'V' model. The 'V' model is a representation technique that aligns the planning process (defining the specifications) on the left and the testing process on the right in the form of the letter 'V'. The steps in chronological order start from the top left of the V, slide down to the bottom and then proceed upwards to the top right of the V. The blocks along the path vary case by case. If you google 'V model' images, you will see a lot of examples, and none of them are exactly the same. Following is my version of the V model for cellular technology. You may or may not agree with the details; you may have your own version. Whatever it is, it is good to draw this kind of model to get a clear big picture of your own process.

In the case of cellular technology, the workload on the left side is relatively small since most of the job has been done by international standards organizations like 3GPP. Probably only the specification right on top of the Code/Hardware implementation (API or Unit specification) would be your own.

In this page, I will mainly talk about the lower right part of the V model (Unit Test, Integration Test, System Test). I will not touch much on the upper right part of the V model since it is usually defined by 3GPP and implemented by test equipment vendors. There is not much room for you to put your own ideas into those steps.

Unit to System

In the testing procedures (right side of the V model) in cellular technology, you don't have to worry much about the parts marked in orange (Pre-Certification and above) in terms of test strategy/methodology, because the detailed test procedure/methodology is already established by somebody else (mostly international standards organizations like 3GPP). However, you may need to establish your own strategy/methodology for the tests in the lower half (e.g., Unit Test, Integration Test, System Test) if you are involved in testing at an early stage of the development cycle.

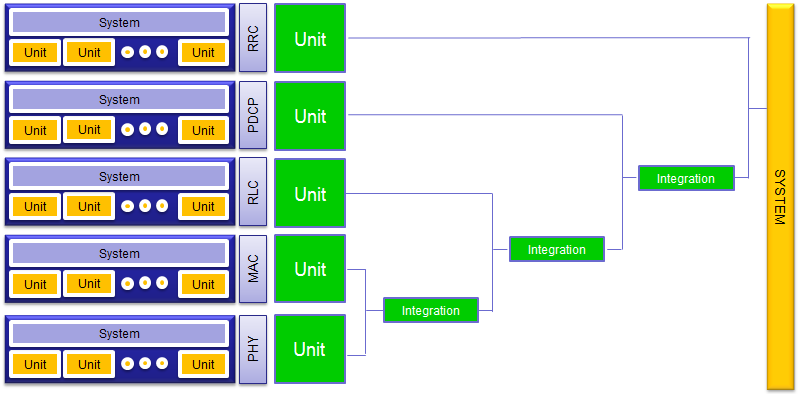

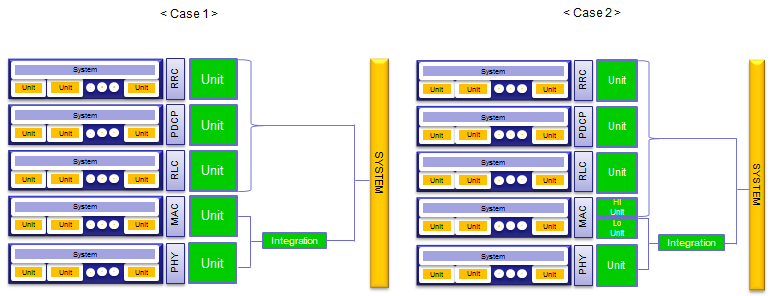

Practically and logically, you can illustrate the overall development process and testing as shown below. If your company is working on a cellular modem chipset (whether a modem for a mobile phone or a modem for an eNB), you might have an independent development team for each layer. And there would be another team in charge of integrating all of the layers into a whole system.

In terms of testing, the first thing you have to figure out (or define) is what the fundamental unit is (the smallest component to be tested) and what your system is. This definition is very important. However, the definition differs depending on the specific area (scope) your testing covers.

For example, if you are working in a PHY development team and set up a test procedure for the PHY layer only, your test Unit would be various basic functions (e.g., APIs or FPGA blocks performing each step of the various channel coding processes) and your test System would be the whole integrated PHY layer. I will describe more on this in the following sections. If you are working in the integration team for the full protocol stack, your test Unit can be the whole PHY or the whole MAC, etc., and your System would be the whole modem with the full stack.

What is Unit Test ?

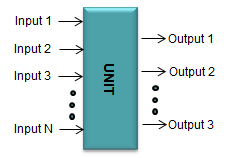

As I mentioned above, the definition of a Unit depends on the scope of the overall testing that you are working on. However, whatever your scope is, the most important thing is to clearly define each and every possible Unit. One of the most common examples of a test Unit is an API function (if the system under test is software) or the minimum hardware component to which you can connect measurement probes and measure various quantities (e.g., current, voltage or digital pulses).

Regardless of whether it is hardware or software, a unit has well-defined inputs and outputs as illustrated below. The functionality of the unit is to convert the given inputs into the expected outputs.

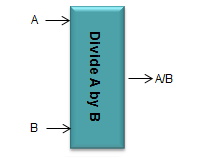

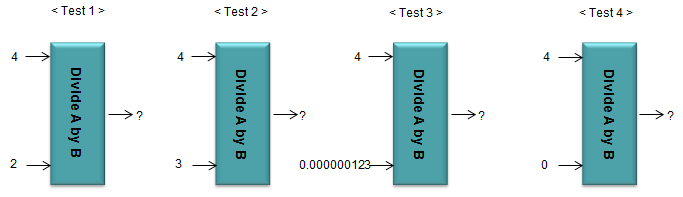

You need to be able to clearly define what the inputs and the outputs of the unit are. Suppose you are working on a software project like developing a spreadsheet (e.g., Excel). Each of the mathematical functions can be a test Unit. For example, if you have a function named 'Div(a,b)', the unit can be illustrated as shown below.

Once you have defined a unit as shown above, an example of a Unit test can be as follows. In most cases, it is impossible to perform the unit test for all possible combinations of inputs and outputs. So the job of the experts in the test/verification team is to figure out the best set of input/output combinations that can verify the unit with minimum effort (the fewest tries). In many cases, we test with some typical cases and some corner cases. A 'corner case' is a case that may not happen often but is highly likely to create a problem when it does. In our Div(a,b) example, Tests 1 and 2 may belong to the typical cases and Tests 3 and 4 may belong to the corner cases. Usually it is relatively easy to define typical cases, but it tends to be difficult to define corner cases.

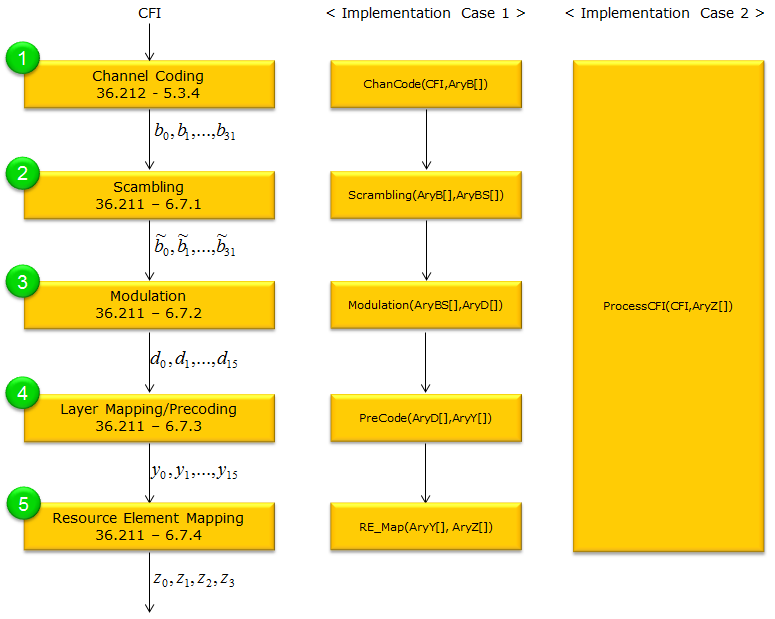
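As a rough illustration of the typical/corner case idea for Div(a,b), here is a minimal Python sketch. The function `div`, the four test cases and the `"ERROR"` marker are my own assumptions for illustration; they are not the exact cases of the original test table.

```python
def div(a, b):
    """Hypothetical spreadsheet-style division unit: two inputs, one output."""
    if b == 0:
        # Assumed behavior: a zero divisor is reported as an error.
        raise ZeroDivisionError("division by zero")
    return a / b


# Each case: (name, a, b, expected). "ERROR" marks an expected exception.
TEST_CASES = [
    ("typical: exact result", 6, 3, 2.0),
    ("typical: fractional result", 1, 4, 0.25),
    ("corner: zero divisor", 1, 0, "ERROR"),
    ("corner: zero numerator", 0, 5, 0.0),
]


def run_case(a, b, expected):
    """Run one input combination and compare against the expected output."""
    try:
        actual = div(a, b)
    except ZeroDivisionError:
        actual = "ERROR"
    return actual == expected


def run_all(cases):
    """Return a {test name: passed?} map for the whole case list."""
    return {name: run_case(a, b, expected) for name, a, b, expected in cases}


for name, passed in run_all(TEST_CASES).items():
    print("PASS" if passed else "FAIL", name)
```

The point of keeping the cases in a table like `TEST_CASES` is that adding a newly discovered corner case is a one-line change, and the same table can be rerun later when something breaks at integration or system level.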

Now let's look into a more practical case in wireless communication. Following shows the CFI channel process (I picked this as an example since it is much simpler than other channels like PDSCH or PUSCH). The leftmost track shows the CFI coding process depicted in the 3GPP specification. How do you implement this specification in your protocol stack? Of course, there can be an almost infinite number of ways to implement it. I put two typical examples of implementation: Case 1 and Case 2. Logically and personally I prefer Case 1, but depending on your hardware architecture you may need to implement it like Case 2 (as you might see, Case 1 may need a lot of memory copies or data transfers between the functions unless you come up with special techniques to reduce this kind of data transfer).

How can we define the 'Unit' in your test plan? The answer differs depending on how you implemented this process. If you implemented it as in Case 1, your 'Units' in the test procedure would be ChanCode(), Scrambling(), Modulation(), PreCode() and RE_Map(). If you implemented it as in Case 2, you have only one 'Unit', i.e., ProcessCFI().

Personally I think Unit definition and Unit testing is the most important step, but in reality I think it is frequently the least performed step in many projects (especially in the many failing projects I have observed).
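To make the difference between the two cases concrete, here is a minimal Python sketch, not a real PHY implementation. The function names mirror ChanCode(), Scrambling(), Modulation() and ProcessCFI() from the text; the bodies are my own assumptions. The code word pattern and the QPSK mapping follow my understanding of 3GPP TS 36.212 and TS 36.211 and should be checked against the specification, the scrambling sequence is simply passed in by the caller instead of being generated, and PreCode() and RE_Map() are left out for brevity.

```python
import math

# Repeating 3-bit patterns that build the 32-bit CFI code words
# (assumed from TS 36.212; verify before relying on them).
_CFI_PATTERN = {1: (0, 1, 1), 2: (1, 0, 1), 3: (1, 1, 0)}


def chan_code(cfi):
    """Case 1 unit: map a CFI value (1..3) to a 32-bit code word."""
    pattern = _CFI_PATTERN[cfi]
    return [pattern[i % 3] for i in range(32)]


def scrambling(bits, scramble_seq):
    """Case 1 unit: XOR the bits with a caller-supplied scrambling sequence."""
    if len(bits) != len(scramble_seq):
        raise ValueError("bit and scrambling sequence lengths differ")
    return [b ^ c for b, c in zip(bits, scramble_seq)]


def modulation(bits):
    """Case 1 unit: QPSK, two bits per symbol (bit 0 -> +, bit 1 -> -)."""
    scale = 1 / math.sqrt(2)
    return [
        complex((1 - 2 * bits[i]) * scale, (1 - 2 * bits[i + 1]) * scale)
        for i in range(0, len(bits), 2)
    ]


def process_cfi(cfi, scramble_seq):
    """Case 2 unit: the whole chain behind a single entry point.

    Here it just chains the Case 1 functions; a real Case 2 implementation
    may fuse the stages so that none of them can be called on its own.
    """
    return modulation(scrambling(chan_code(cfi), scramble_seq))


# Case 1: every stage can be checked in isolation.
print(chan_code(1)[:6])                      # [0, 1, 1, 0, 1, 1]
print(scrambling([0, 1, 1, 0], [1, 1, 0, 0]))  # [1, 0, 1, 0]

# Case 2: only the end-to-end input/output pair is visible to the test.
print(len(process_cfi(2, [0] * 32)))         # 16
```

With Case 1 a wrong symbol at the output can be narrowed down by testing each stage separately. With Case 2 the test plan can only compare the final symbols against a reference, so the reference vectors have to be prepared more carefully.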

Why do I think the Unit test is the most important step? There are a couple of reasons.

i) Technically/theoretically, you need to prove that your unit works as expected at least for a couple of typical inputs.

ii) Practically, a well-defined unit test will be the best tool for troubleshooting at later steps (i.e., in integration test or in system test).

I want to put some more comments on item ii). In my experience, I am almost 100% sure that you will come across a situation where you have some problem with the system and the trouble has to be investigated at a very low level. Problems caused by lower layer issues are usually very hard to troubleshoot. Sometimes even simple trace logging is not easy, because enabling all the logging at the very low layers may cause another performance issue. However, if you have well-defined sets of Unit tests, you may be able to point out at least a handful of units that MAY be the root cause of the problem, and you can just run those unit tests to reproduce the problem. Once you are able to reproduce the problem, fixing it is relatively easy. In many cases (especially in many failing projects), I have seen that there were not many well-defined Unit tests. In later steps some problem happens, and even a single problem may delay the whole project by days (if you are lucky) or by weeks or even months (if you are not lucky).

Then your next question would be 'Why do they fail to define/implement good unit test cases in some projects?'. Based on my experience/observation, it is more of a human factor and management issue than a purely technical issue. Following are my observations.

i) Most unit tests (and unit definitions as well) require very detailed domain knowledge, at almost the same level as the developers. It is hard to find verification engineers with that level of knowledge (it may be almost impossible).

ii) So, in many cases Unit Tests (and Unit definitions) are supposed to be done by developers, but developers do not have enough time for this while they are busy with development. Also, not many developers like this kind of documentation and test case creation job.

iii) Management does not realize that this is an important step. So they do not allocate enough people and time for this step and just expect the whole bunch of system level test cases to pass. In many cases, managers tend to focus only on the number of test titles in the spreadsheet and do not care much about how to achieve the testing goal.

I think the critical factor for the success of the Unit test stage is a verification engineer with detailed domain knowledge, at least good enough to understand what the R&D engineers are talking about and to understand the industry specification documents in depth.

Integration Test/System Test/Function Test

Literally speaking, you can say 'Integration Test' is the test at each integration step (mostly before all the units are combined into a complete system) and 'System Test' is the test after all the units are assembled (integrated) into a complete system. But in reality, the boundary between Integration Test and System Test may not be as clear as it sounds.

In theory, integration of a system proceeds by adding one unit (or one layer) at a time, as illustrated below.

But in reality, this kind of "one layer at a time" integration might not be easy for various reasons. In my experience supporting many projects, the integration goes more like what is shown below. Usually PHY and MAC are integrated one by one, but once PHY/MAC are integrated, all the remaining layers of the stack get integrated at once. In this case, it is hard to make a clear distinction between integration test and system test. After PHY/MAC integration, you may say Integration Test and System Test are almost the same.

It is also hard to draw a clear borderline between System Test and Function Test, because in most cases the Function test is done after full system integration and at the system level (not at the Unit level).
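The two integration styles can be compared with a small Python sketch. It is only a toy model under my own assumptions: each layer just wraps the payload with its name, and the step lists `ONE_AT_A_TIME` and `IN_PRACTICE` are illustrative, not a real stack.

```python
def incremental_integration(steps, payload="data"):
    """Integrate layers step by step, bottom layer first.

    steps: list of lists of layer names added at each integration step,
           each inner list ordered from lower to upper layer.
    Returns one (newly added layers, stack output) pair per step.
    """
    bottom_up = []
    results = []
    for added in steps:
        bottom_up.extend(added)
        out = payload
        # Data travels downwards: the top layer wraps the payload first.
        for name in reversed(bottom_up):
            out = f"{name}[{out}]"
        results.append((list(added), out))
    return results


# Theory: one layer per step, so a new failure points at exactly one layer.
ONE_AT_A_TIME = [["PHY"], ["MAC"], ["RLC"], ["PDCP"], ["RRC"]]

# Practice as described above: PHY, then MAC, then everything else at once.
IN_PRACTICE = [["PHY"], ["MAC"], ["RLC", "PDCP", "RRC"]]

for added, out in incremental_integration(IN_PRACTICE):
    print(f"added {added}: {out}")
```

In the last step of `IN_PRACTICE` three layers arrive together, so a failure at that step has three suspects instead of one, and the test run at that step is effectively already a system test.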

In practice, a 'Function test' usually defines several very rough functional items without defining the detailed intermediate process. For example, if you are testing a mobile phone and doing a 'Browsing' test at the functional test level, the test case would go as follows.

i) Turn on the DUT and camp on to the Test Equipment or Live Network

ii) Open a browser on the DUT (mobile phone)

iii) Type in www.google.com at the URI input

iv) Check if the UE displays the contents of www.google.com

As you see here, the procedure describes each step at a very high level (almost only at the user interface level) and rarely defines any detailed procedure (e.g., detailed radio protocol and IP layer protocol). So even when multiple DUTs pass the same function test, the detailed procedure (e.g., detailed protocol sequence) may not be the same. In addition, even though a DUT has passed this function test, you cannot guarantee that it went through the specific protocol sequence you expected.

If you have a very well implemented system and all the function test cases pass without any failure, you are lucky. But if the system integration is not so perfect (as in most cases), you will see issues (failures) during the test. It tends to be very difficult to troubleshoot based on a function test case document. For any kind of troubleshooting, you need a very clear specification of the expected behavior and internal sequence (e.g., detailed protocol sequence). Otherwise, you don't even know what to fix. So if you have only a function test document and do not have detailed knowledge of the internal process, troubleshooting is extremely difficult. Unfortunately, I come across this kind of situation too often while working on many projects.
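The gap between a function-level verdict and a protocol-level verdict can be sketched in Python as below. The two traces are made up for illustration (the message names are LTE RRC message names, but the logs themselves are hypothetical), and `function_test` / `sequence_test` are assumed helper names, not part of any test equipment API.

```python
def function_test(displayed_page, expected_page):
    """Function-level verdict: only the user-visible result is checked."""
    return displayed_page == expected_page


def sequence_test(trace, expected_sequence):
    """Protocol-level verdict: the recorded messages must match exactly."""
    return list(trace) == list(expected_sequence)


EXPECTED_SEQUENCE = [
    "RRCConnectionRequest",
    "RRCConnectionSetup",
    "RRCConnectionSetupComplete",
]

# Hypothetical logs from two DUTs that both end up showing the page.
DUT_A_TRACE = [
    "RRCConnectionRequest",
    "RRCConnectionSetup",
    "RRCConnectionSetupComplete",
]
DUT_B_TRACE = [
    "RRCConnectionRequest",
    "RRCConnectionReject",        # first attempt rejected, DUT retries
    "RRCConnectionRequest",
    "RRCConnectionSetup",
    "RRCConnectionSetupComplete",
]

for name, trace in (("DUT A", DUT_A_TRACE), ("DUT B", DUT_B_TRACE)):
    func_ok = function_test("www.google.com", "www.google.com")
    seq_ok = sequence_test(trace, EXPECTED_SEQUENCE)
    print(f"{name}: function test {func_ok}, sequence test {seq_ok}")
```

Both DUTs pass the function test, but only DUT A followed the expected sequence. A function test document alone would not reveal the difference, which is why troubleshooting needs the expected internal sequence written down somewhere.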
