LTE - Low Throughput Application

As far as I remember, most of the evolution in cellular communication so far has focused on increasing throughput, and this trend is unlikely to change much in future generations (e.g., 5G). Then why do we care about 'Low Throughput' issues? As of now (Jan 2015), it is true that most of the demand for cellular communication (especially LTE) lies in high throughput applications. But as we start bringing into cellular communication applications that were not previously considered part of it, we need to think about providing optimal conditions for those applications. The most common examples are M2M (Machine to Machine) communication and sensor networks. To meet this kind of specific demand, 3GPP defined a new category labeled 'Category 0' with a maximum throughput of 1 Mbps in Release 12. Even before this category was adopted, there were vendors already manufacturing various devices targeted at such low throughput applications.

Are there any technical issues in achieving low throughput?

Now you may say: "OK, now I understand the demand for low throughput applications, but is there any specific reason why we need to worry about them? We have already developed devices that work fine at super high throughput. Wouldn't it be possible to simply downgrade a high throughput device to low throughput performance? Or just let the network allocate a small number of resource blocks?" Theoretically this can be true, but as you know, life is not that easy in any type of engineering. Usually, when you redirect a technology toward a different application, the constraints on the technology and the application also tend to change. Then what kind of constraint changes do we need to think about for low throughput technology? Some of the constraints that come to my mind now are as follows:

Considering these factors, we may need to go through a considerable amount of redesign (on the device side) and optimization (on the network side). In extreme cases, it may not be possible to fully optimize the existing technology for an application with extremely low throughput and bursty communication. For this kind of application, a completely different technology such as LTN (Low Throughput Network) is being researched now. However, LTN is not in the scope of this page. (Refer to the LTN section under the "IoT" menu if you want further details.) On this page, I will focus on low throughput applications of LTE.

Factors influencing Low Throughput Application

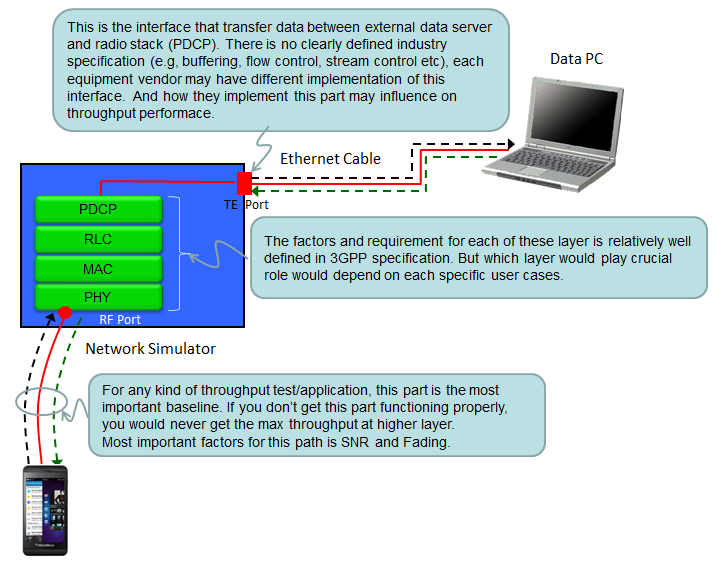

The illustration shows the overall structure of the throughput test environment. Every component shown in it influences throughput performance. So to optimize the throughput, you need detailed information on the parameters of each component on the data path, and you need full control over those parameters. However, certain types of information are hard to obtain. As I commented in the illustration, it is hard to get detailed information about the network interface implementation, even though it can influence the performance a lot in certain cases.

In my experience, it is relatively easy to achieve the optimum throughput in the medium range (e.g., Cat 3 or Cat 4 max throughput), but it is not easy to achieve extremely high throughput (e.g., Cat 6 or Cat 9 as of the Jan 2015 timeframe) or very low throughput based on very small packet sizes. In both cases, extremely high throughput and low throughput with small packets, the bottleneck seems to be at PDCP and above, including the packet interface (TE port).

In the extremely high throughput case, the bottleneck tends to lie in how fast the data server can generate packets, how fast the packet interface can get those transactions through, how much internal buffer the test system has, and how well flow control is implemented between the components on the data path.
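To get a rough feel for why buffer size and flow control matter here, the sketch below estimates how long a buffer survives when one component feeds it faster than the next component drains it. This is only a back-of-the-envelope model under my own assumptions: the function name, the 4 MB buffer and the 400 Mbps server rate are hypothetical illustration values, not figures from any real test system. The 300 Mbps drain rate is the Cat 6 downlink peak rate.

```python
import math


def seconds_until_overflow(buffer_bytes: int, ingress_bps: float, egress_bps: float) -> float:
    """Time until a buffer overflows when it is filled at ingress_bps and
    drained at egress_bps, with no flow control slowing the sender down.
    Returns infinity when the drain rate keeps up with the fill rate."""
    surplus_bps = ingress_bps - egress_bps
    if surplus_bps <= 0:
        return math.inf
    return buffer_bytes * 8 / surplus_bps


# Hypothetical example: the data server pushes 400 Mbps into a 4 MB buffer
# while the radio side drains it at 300 Mbps (Cat 6 downlink peak).
buffer_bytes = 4 * 1024 * 1024
t = seconds_until_overflow(buffer_bytes, ingress_bps=400e6, egress_bps=300e6)
print(f"Buffer overflows after about {t:.2f} s without flow control")
```

If the result is well under a second, as it is with these assumed numbers, packets get dropped almost immediately unless some flow control tells the server to slow down, which is why the flow control implementation shows up as a bottleneck.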

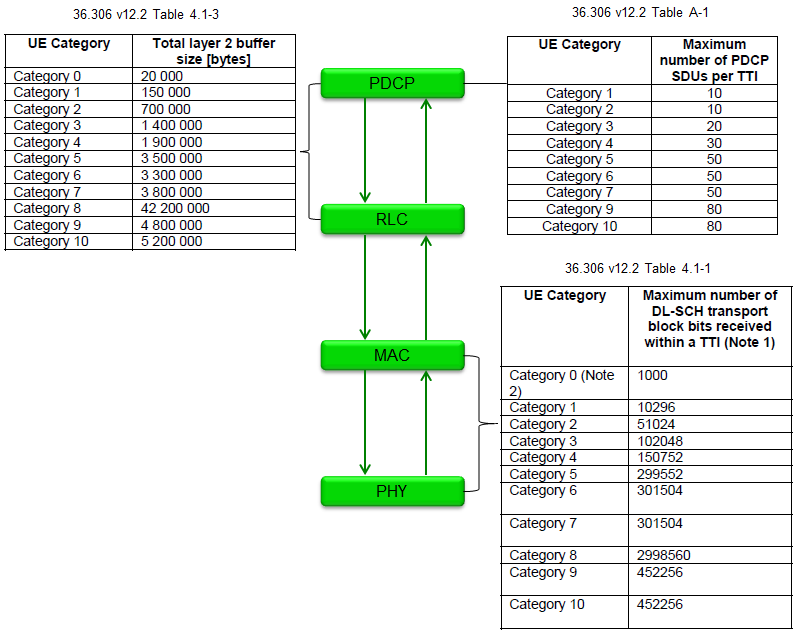

In the case of low throughput with small packet sizes, the bottleneck tends to be how many packets can pass through each component on the data path. For some layers only the size of the packets matters and the number of packets matters little, while for other layers the size matters little and the number of packets matters. In most test equipment (as far as I know), it seems that the number matters at the higher layers (e.g., RLC, PDCP) and the size matters at the lower layers (MAC/PHY). By the specification, there is almost no limit on packet size in RLC/PDCP, but PHY/MAC always has a predefined size limit. (However, in real equipment and even in live networks, there should be some degree of size restriction even in RLC/PDCP due to limited buffer size. What I am trying to say is that, in general, RLC/PDCP is less restricted by size compared to the lower layers.)
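The count-versus-size idea can be put into a tiny model: the achievable throughput is the smaller of what the packet-count limit allows and what the lower-layer rate allows. This is just a sketch under my own assumptions. The function name and the 20,000 packets/s ceiling are hypothetical values chosen for illustration; real equipment will have its own limits. The 150 Mbps figure is the Cat 4 downlink peak rate.

```python
def achievable_throughput_bps(packet_size_bytes: int,
                              max_packets_per_s: float,
                              phy_limit_bps: float) -> float:
    """Throughput limited either by the number of packets the higher layers
    can process per second or by the bit rate the lower layers can carry."""
    packet_limited_bps = max_packets_per_s * packet_size_bytes * 8
    return min(packet_limited_bps, phy_limit_bps)


# Hypothetical limits: 20,000 packets/s at the higher layers (assumption),
# 150 Mbps at the lower layers (Cat 4 downlink peak).
MAX_PPS = 20_000
PHY_LIMIT_BPS = 150e6

for size in (64, 256, 1500):
    bps = achievable_throughput_bps(size, MAX_PPS, PHY_LIMIT_BPS)
    print(f"{size:>5}-byte packets: {bps / 1e6:.2f} Mbps")
```

With these assumed limits, 64-byte packets are capped by the packet count long before the radio link is full, while 1500-byte packets hit the lower-layer rate limit first.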

Is there any 3GPP specification that clearly specifies all the parameters for each component on the data path? Not all of them in detail, but you can find some overall guidelines in 3GPP TS 36.306, as summarized below.
