
AI/ML

Finally AI/ML is getting into 3GPP :-). This note is not about what AI/ML is. I have a whole list of sections about AI/ML itself, and 3GPP has also posted a short article about AI/ML. This note is more about how and where AI/ML will be used in the 3GPP framework.

In terms of 3GPP specifications, artificial intelligence (AI) was introduced many years ago and has evolved to enhance cellular network functionality, even though real implementation and deployment are not there yet. The diagram shown below provides a timeline of the integration and evolution of AI across 3GPP releases.

Image Source : Artificial Intelligence in Cellular Networks

- 3GPP Rel 15 (Pre-2018)

  - AI was introduced with NWDAF for predictive maintenance of core network elements to enhance reliability, reduce downtime, and prevent potential performance degradation. MDA supported predictive maintenance to mitigate potential failures.

- 3GPP Rel 16 (2020)

  - Traffic Forecasting: AI-driven solutions were implemented to forecast traffic, helping manage network load and improving user experiences.

  - End-to-End Service Management: AI-enabled solutions focused on real-time performance monitoring and automated troubleshooting for seamless service management.

- 3GPP Rel 17 (2022)

  - Enhanced NWDAF: Expanded functionalities included support for horizontal federated learning and complex AI/ML use cases such as predictive maintenance, QoS management, and threat detection.

  - AI/ML Support for MDA: Provisions for AI/ML model training and deployment were defined under TS 28.105.

  - AI Applications: AI was applied in energy savings, load balancing, and mobility optimization to improve overall network efficiency.

- 3GPP Rel 18 (2024)

  - AI-Based NR Air Interface: Focused on incorporating AI in beam management, positioning, CSI prediction, and feedback mechanisms to optimize network performance.

  - Cognitive Network Management: Introduced systems that adapt to real-time network conditions and support closed-loop automation.

- 3GPP Rel 19 (2025+)

  - Advanced NWDAF Integration: Plans for vertical federated learning to enhance AI techniques in NWDAF for advanced analytics.

  - Native AI in RAN: Integration of native AI capabilities within the Radio Access Network for deeper optimization and innovation.

The integration of Artificial Intelligence (AI) and Machine Learning (ML) into 5G networks is a key focus of 3GPP’s 5G-Advanced framework, encompassing the Radio Access Network (RAN), Core Network (5GC), and network management and orchestration (OAM). AI/ML is being systematically studied and implemented across multiple domains to enhance network efficiency, automation, and intelligent decision-making.

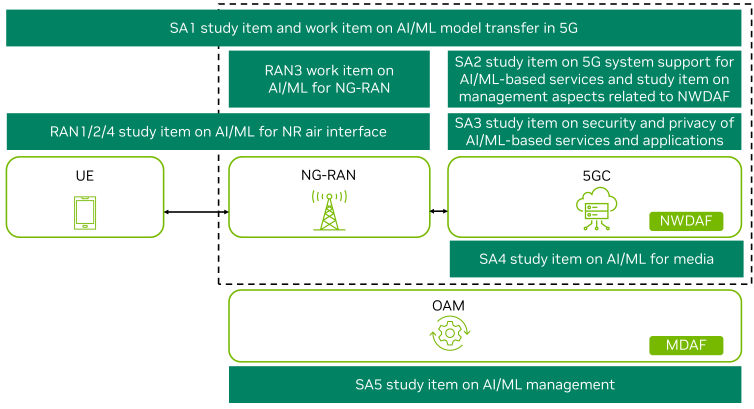

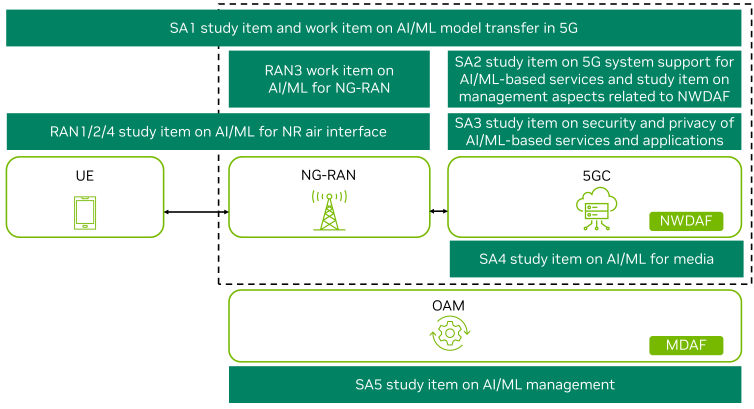

The diagram below provides an overview of AI/ML study and work items in 3GPP's 5G-Advanced framework, which aligns with the AI initiative

Image Source : Artificial Intelligence in 3GPP 5G-Advanced: A Survey

As illustrated in the diagram, the major components of AI integration across the entire network can be split as listed below.

- RAN (Radio Access Network) – AI/ML enhances the air interface, radio resource management, beamforming, and self-organizing networks.

- Core Network (5GC) – AI-driven analytics (NWDAF) optimize traffic prediction, anomaly detection, and intelligent network slicing.

- Network Management (OAM) – AI-powered automation (MDAF) improves fault detection, predictive maintenance, and performance optimization.

- Security, Privacy, and Service Support – AI/ML ensures secure model training, protects user data, and addresses adversarial threats in 5G services.

AI/ML in NG-RAN (Next Generation RAN)

AI and Machine Learning (ML) are playing a crucial role in enhancing the Next Generation Radio Access Network (NG-RAN) by improving efficiency, adaptability, and performance. Within 3GPP, multiple study and work items are dedicated to integrating AI/ML into different aspects of NG-RAN. The RAN1/2/4 study item focuses on AI/ML-based enhancements at the physical and MAC layers, including channel state information (CSI) prediction, beamforming optimization, and interference management, all of which contribute to more efficient spectrum usage and improved connectivity. Meanwhile, the RAN3 work item explores AI/ML applications for network control and self-optimization, particularly in self-organizing networks (SON) and load balancing, allowing for dynamic resource allocation and enhanced user experience. These AI-driven innovations aim to make NG-RAN more intelligent, autonomous, and capable of handling complex network conditions in 5G-Advanced and beyond.

- RAN1/2/4 Study Item on AI/ML for NR Air Interface: Focuses on AI/ML-based enhancements to the physical and MAC layers, such as channel state information (CSI) prediction, beamforming optimization, and interference management.

- RAN3 Work Item on AI/ML for NG-RAN: Concentrates on AI/ML applications for network control and self-optimization, including self-organizing networks (SON) and load balancing.

AI/ML in the 5G Core (5GC)

AI and Machine Learning (ML) are transforming the 5G Core (5GC) by enabling smarter network operations, traffic management, and media optimization. Within 3GPP, the SA2 study item focuses on system-level AI/ML integration, particularly through the Network Data Analytics Function (NWDAF), which enhances intelligent traffic management, anomaly detection, and network optimization. By leveraging AI-driven insights, NWDAF enables real-time decision-making for improved performance and resource utilization.

Additionally, the SA4 study item explores AI applications in media, focusing on real-time multimedia processing and video optimization to enhance user experience and network efficiency. These AI-driven advancements in the core network contribute to a more intelligent, adaptive, and efficient 5G system, paving the way for AI-native 6G networks.

- SA2 Study Item: Covers system-level AI/ML integration, particularly in Network Data Analytics Function (NWDAF), enabling intelligent traffic management and anomaly detection.

- SA4 Study Item on AI/ML for Media: Focuses on AI-driven enhancements in real-time multimedia processing and video optimization.

AI/ML in Network Management & Orchestration (OAM)

AI and Machine Learning (ML) are revolutionizing network management and orchestration (OAM) in 5G by enabling intelligent automation, predictive maintenance, and real-time analytics. The SA5 study item focuses on AI/ML-driven network management, covering key areas such as fault detection, performance optimization, and self-healing mechanisms to enhance operational efficiency. Additionally, the Management Data Analytics Function (MDAF) plays a crucial role in providing real-time insights and automation at the OAM level, ensuring proactive issue resolution and dynamic resource allocation. By integrating AI/ML into network management, operators can achieve greater efficiency, reduced downtime, and enhanced service reliability, ultimately leading to a more autonomous and intelligent 5G network.

- SA5 Study Item on AI/ML Management: Encompasses AI-driven fault detection, predictive maintenance, and intelligent automation in network operations.

- Management Data Analytics Function (MDAF): Supports real-time analytics and automation at the OAM level.

Security and Privacy Aspects of AI/ML

As AI and Machine Learning (ML) become integral to 5G networks, ensuring security and privacy in AI-powered services is a critical challenge. The SA3 study item focuses on protecting AI/ML-based applications by addressing key concerns such as secure AI model training, data privacy, and adversarial robustness. AI models in 5G must be safeguarded against threats like data poisoning, model inversion attacks, and unauthorized access to sensitive information. By implementing robust security frameworks and privacy-preserving techniques, 3GPP aims to create a trustworthy AI-driven 5G ecosystem that ensures reliable and secure operations across RAN, Core, and network management functions.

- SA3 Study Item on Security and Privacy of AI/ML-based Services and Applications: Ensures secure AI model training, data privacy, and adversarial robustness in AI-powered 5G services.

AI/ML Model Transfer in 5G (SA1)

AI/ML model transfer is a crucial aspect of integrating intelligence across 5G networks, enabling seamless updates and optimization of AI-driven functionalities. The SA1 study item focuses on standardizing how AI models are transferred across different network functions, ensuring interoperability and efficiency in a multi-vendor 5G ecosystem. This includes developing frameworks for distributed learning, where AI models can be collaboratively trained and updated across various network components without compromising security or performance. By enabling efficient AI model transfer, 5G networks can continuously evolve, leveraging real-time intelligence to enhance performance, adaptability, and automation across RAN, Core, and network management layers.

- Standardizing how AI models are transferred across network functions, ensuring efficient model updates and distributed learning in a multi-vendor 5G ecosystem.

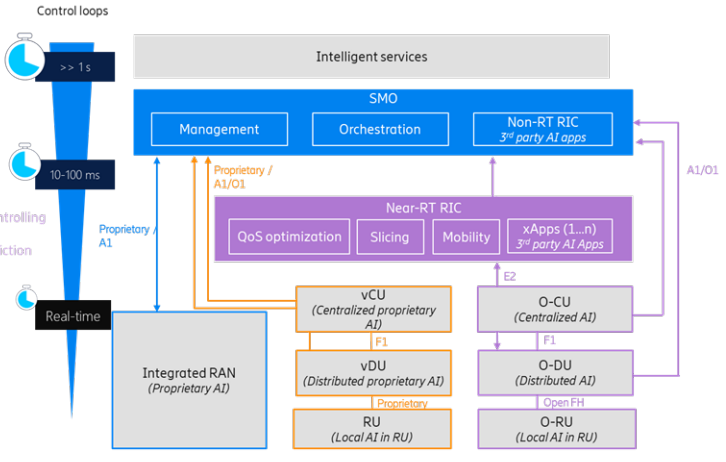

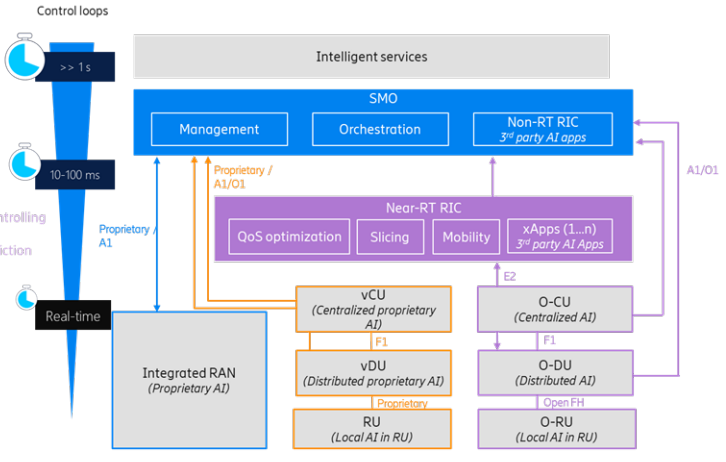

AI plays a role in various components and layers within the overall architecture of a RAN (Radio Access Network). The role is well summarized in the diagram shown below. This diagram illustrates the interaction of AI workloads across different RAN architectures, emphasizing the integration of AI in enabling intelligent services and optimizing network performance. It showcases various AI workloads classified by their control loop timing: long timescales for tasks like prioritizing first responders, medium timescales for admission control and bandwidth prediction, and real-time processes for beamforming and scheduling. The architecture involves elements like the Service Management and Orchestration (SMO) layer, non-real-time RIC for third-party AI applications, near-real-time RIC for QoS optimization and mobility, and the underlying RAN components (vCU, vDU, RU, O-CU, O-DU, and O-RU). These layers interact using standardized interfaces (A1/O1 and E2), enabling a hierarchical and distributed approach to AI-driven RAN operations. The integration of proprietary and third-party AI solutions ensures efficient, flexible, and adaptive control across network layers.

Image Source : Artificial Intelligence in Cellular Networks

The following is a breakdown and description of the above diagram.

- AI Workloads and Control Loops

  - Long Timescale (>1s):

    - First responder prioritization.

    - Camera prioritization for live events.

    - UE QoS assurance.

  - Medium Timescale (10-100 ms):

    - Admission control xApps for controlling RAN behavior.

    - Service-based bandwidth prediction.

  - Real-Time Control:

    - Beamforming.

    - Scheduling.

    - Coordinated Multipoint (CoMP).

    - Fast spectrum management.

- Service Management and Orchestration (SMO)

  - Management and Orchestration: Centralized layers enabling intelligent service management.

  - Non-RT RIC: Supports third-party AI applications (xApps) for tasks like QoS optimization, slicing, and mobility.

- Near-RT RIC

  - Handles time-sensitive tasks with xApps for QoS, slicing, and mobility.

  - Enables integration of third-party AI applications with proprietary AI.

- RAN Components and AI Distribution

  - vCU: Centralized proprietary AI.

  - vDU: Distributed proprietary AI.

  - RU: Local AI in the RU.

  - O-CU: Centralized AI with Open FH support.

  - O-DU: Distributed AI with Open FH support.

  - O-RU: Local AI in Open FH RU.

- Interfaces

  - A1/O1: Interfaces connecting the SMO, non-RT RIC, and near-RT RIC for coordination between AI workloads.

  - E2: Interface enabling interaction between RICs and RAN components.

- Integration of Proprietary and Third-Party AI

  - Combines proprietary AI solutions with third-party AI xApps to achieve flexible, adaptive, and efficient RAN operations.
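The timescale classification in the breakdown above can be sketched as a small lookup. This is only an illustration: the 10 ms and 1 s boundaries and the mapping from timescale to hosting layer are assumptions made for the example (the breakdown itself only quotes ">1s" and "10-100 ms" as ranges), and the names `control_loop_timescale` and `hosting_layer` are hypothetical.

```python
# Tier boundaries in seconds. Both values are assumptions for illustration:
# the breakdown above only quotes ">1s" and "10-100 ms" as example ranges.
LONG_LOOP_S = 1.0
REAL_TIME_LOOP_S = 0.010

# Hypothetical mapping from timescale to the layer that would host the loop.
HOSTING_LAYER = {
    "long": "SMO / non-RT RIC",
    "medium": "near-RT RIC",
    "real-time": "RAN node (CU/DU/RU)",
}


def control_loop_timescale(loop_period_s: float) -> str:
    """Classify a control loop by how often it has to make a decision."""
    if loop_period_s <= 0:
        raise ValueError("loop period must be positive")
    if loop_period_s > LONG_LOOP_S:
        return "long"
    if loop_period_s >= REAL_TIME_LOOP_S:
        return "medium"
    return "real-time"


def hosting_layer(loop_period_s: float) -> str:
    """Return the layer assumed to host a loop with the given period."""
    return HOSTING_LAYER[control_loop_timescale(loop_period_s)]
```

For example, under these assumptions a 50 ms admission control loop would land in the near-RT RIC, while a 1 ms scheduling loop would stay inside the RAN node.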

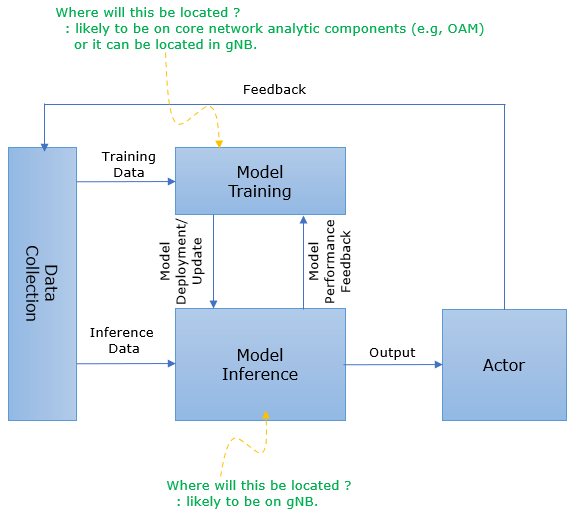

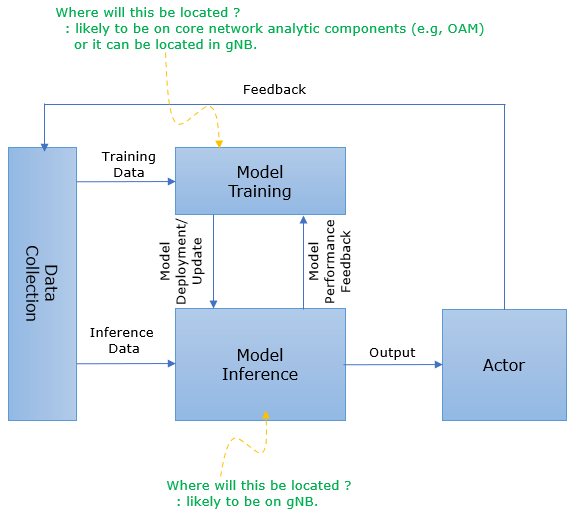

As of now (Dec 2021), it has not been clearly decided exactly what kind of AI/ML framework will be used in the 5G/NR network. One possible high-level view is shown below (this is based on TR 37.817 V1.0.0 (2021-12) - Figure 4.2-1: Functional Framework for RAN Intelligence).

Who is doing the data preparation (data pre-processing and cleaning, formatting, and transformation)?

: The Data Collection component just collects the raw data and does not process it for other components. The data processing / preparation is done by the component that consumes the data. For example, if the collected data is for model training, the Data Collection module just transfers the raw data to the Model Training component, and the Model Training component preprocesses the data.

If the collected data is for model inference, the Data Collection module just transfers the raw data to the Model Inference component, and the Model Inference component prepares the data.
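A minimal sketch of this split of responsibilities is shown here, assuming RSRP-like samples with occasional missing values. The class and function names are hypothetical, and passing the scaling statistics from training to inference is a design choice of the sketch, not something the specification states.

```python
from statistics import mean, pstdev


class DataCollection:
    """Hands raw samples to consumers without cleaning or formatting them."""

    def __init__(self, raw_samples):
        self._raw = list(raw_samples)

    def deliver(self):
        return list(self._raw)  # raw data, missing values included


def drop_missing(samples):
    """Cleaning step used by both consumers: discard missing measurements."""
    return [s for s in samples if s is not None]


class ModelTraining:
    """Consumer that prepares its own training data."""

    def prepare(self, raw_samples):
        values = drop_missing(raw_samples)
        self.mean = mean(values)
        self.std = pstdev(values) or 1.0  # avoid division by zero
        return [(v - self.mean) / self.std for v in values]


class ModelInference:
    """Consumer that prepares its own inference data with the same scaling."""

    def __init__(self, train_mean, train_std):
        self.mean = train_mean
        self.std = train_std

    def prepare(self, raw_samples):
        return [(v - self.mean) / self.std for v in drop_missing(raw_samples)]
```

The point of the sketch is that `DataCollection.deliver()` returns exactly what was collected, and each consumer runs its own `prepare()` step.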

When I was thinking of applying AI/ML in cellular technology (especially on the RAN side), I had so many questions popping up in my head. What kind of neural network model will be used? How will it be trained? Who (gNB or core network) will train the network? What kind of data will be used for training the network? What kind of use cases will there be? And so on and on. As of now (Dec 2021), I don't have clear answers to these questions... I would need to wait until the 3GPP specification of AI/ML is finalized (Rel 18). But at least I can get a glimpse of the general principles of AI/ML implementation from TR 37.817 - 4.1. It is stated as follows (just reading these bullets gives me a pretty good idea).

- The detailed AI/ML algorithms and models for use cases are implementation specific and out of RAN3 scope.

- The study focuses on AI/ML functionality and corresponding types of inputs/outputs.

- The input/output and the location of the Model Training and Model Inference function should be studied case by case.

- The study focuses on the analysis of data needed at the Model Training function from Data Collection, while the aspects of how the Model Training function uses inputs to train a model are out of RAN3 scope.

- The study focuses on the analysis of data needed at the Model Inference function from Data Collection, while the aspects of how the Model Inference function uses inputs to derive outputs are out of RAN3 scope.

- Where AI/ML functionality resides within the current RAN architecture, depends on deployment and on the specific use cases.

- The Model Training and Model Inference functions should be able to request, if needed, specific information to be used to train or execute the AI/ML algorithm and to avoid reception of unnecessary information. The nature of such information depends on the use case and on the AI/ML algorithm.

- The Model Inference function should signal the outputs of the model only to nodes that have explicitly requested them (e.g. via subscription), or nodes that are subject to actions based on the output from Model Inference.

- An AI/ML model used in a Model Inference function has to be initially trained, validated and tested before deployment.

- The generalized workflow should not prevent to “think beyond” the workflow if the use case requires so.

- User data privacy and anonymisation should be respected during AI/ML operation.

In short, these can be summarized as follows:

- 3GPP will not define the exact neural network definition / implementation. It will define only the interface and the types of input/output of the neural network (model).

- The whole AI/ML functionality comprises several different components (e.g., Data Collection, Model Training, Model Inference, Actor). Where these components are located will vary depending on the specific use case.
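One of the principles listed above says that the Model Inference function should signal its outputs only to nodes that have explicitly requested them (e.g. via subscription). A minimal sketch of that idea follows, assuming a simple in-process callback registry; `InferenceFunction` and its methods are hypothetical names, and the sketch does not cover the other case in the principle (nodes that are subject to actions based on the output).

```python
class InferenceFunction:
    """Runs a model and delivers each output only to subscribed nodes."""

    def __init__(self, model):
        self._model = model        # any callable: inputs -> output
        self._subscribers = {}     # node_id -> callback

    def subscribe(self, node_id, on_output):
        self._subscribers[node_id] = on_output

    def unsubscribe(self, node_id):
        self._subscribers.pop(node_id, None)

    def run(self, inputs):
        output = self._model(inputs)
        # Only nodes that asked for the output receive it.
        for on_output in list(self._subscribers.values()):
            on_output(output)
        return output
```

In this sketch the model is just a callable, which matches the point that the algorithm itself is implementation specific and only the inputs/outputs are of interest.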

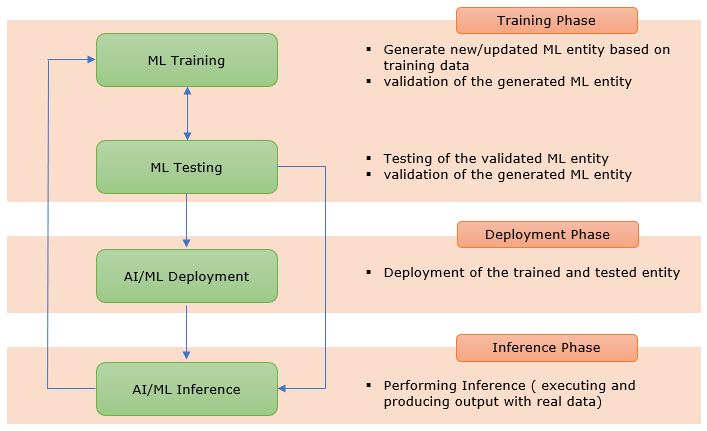

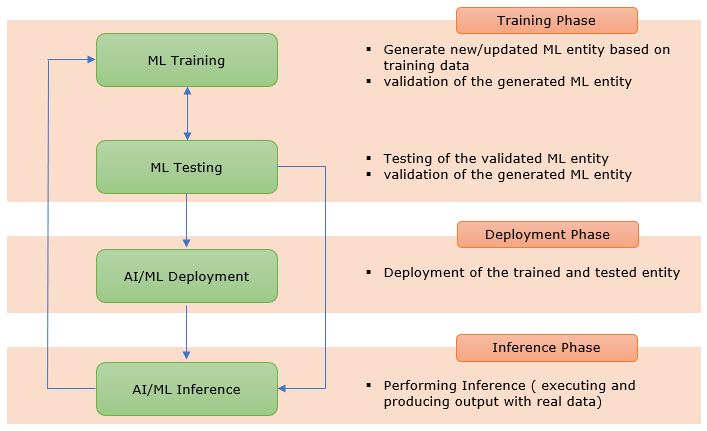

< 5G AI/ML workflow based on TR 28.908 V1.2.0 - 4.3 AI/ML workflow for 5GS >

As most of you already understand, in order to train an AI/ML system, you need clear answers to the following questions:

- What kind of data will be used?

- How will the data be acquired?

- In what format should the data be prepared?

Answers to the first two questions are described in TR 28.908 (v1.2.0) - 5.1.1 Event data for ML training, and I think the answer to the third question will be described in various TSs. In short, according to TR 28.908, the data is called 'Event data', implying that the data indicates certain types of events and is collected by a specific event handler.

The following are some examples of these events.

< TR28.908 (v1.2.0) - Table 5.1.1.4-1: Examples of potential Metric Threshold Crossing events >

| Event-source Function | Event Name | Event description | Input/Metric | MOI | Condition | Threshold | Monitoring Period |
|---|---|---|---|---|---|---|---|
| BTSs; NBs; eNBs; gNBs; BSC; RNC | High Call Drop Rate event | Call Drop Rate more than a configurable threshold | Call Drop Rate | Cell | greater than | 2 % | 15 minutes |
| BTSs; NBs; eNBs; gNBs; BSC; RNC | Low Availability KPIs event | Availability KPIs dropping below a configurable threshold | Availability | Cell | less than | 99 % | 30 minutes |
| BTSs; NBs; eNBs; gNBs; BSC; RNC | Low Retainability KPIs event | Retainability KPIs dropping below a configurable threshold | Retainability | Cell | less than | 98 % | 30 minutes |
| BTSs; NBs; eNBs; gNBs; BSC; RNC | High Traffic event | Traffic greater than a configurable threshold | Cell load / PRB Utilization | Cell | greater than | 80 % | 15 minutes |
| BTSs; NBs; eNBs; gNBs; BSC; RNC | Interference event | User has experienced interference | SINR | Cell | less than | X dB | 15 minutes |
| BTSs; NBs; eNBs; gNBs; BSC; RNC | Serving cell | - | RSRP | Cell | greater than | Y dBm | 15 minutes |

Event-source Function : The network component that collects the data.

MOI : Managed Object Instance. A part of a network that can be managed independently. This could be a physical component, such as a cell in a cellular network, or a logical component, such as a service or a process.
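The columns of the Metric Threshold Crossing table map naturally onto a small record type. The sketch below is an illustration only: `MetricThresholdEvent` is a hypothetical name, and averaging the samples over one monitoring period before comparing against the threshold is an assumption of the sketch, not something stated in TR 28.908.

```python
import operator
from dataclasses import dataclass

# The two conditions that appear in the example table.
CONDITIONS = {"greater than": operator.gt, "less than": operator.lt}


@dataclass(frozen=True)
class MetricThresholdEvent:
    name: str
    metric: str
    moi: str                      # Managed Object Instance, e.g. "Cell"
    condition: str                # key into CONDITIONS
    threshold: float
    monitoring_period_min: int

    def crossed(self, samples):
        """True if the metric, averaged over one period, crosses the threshold.

        Averaging is an assumption made for this sketch.
        """
        if not samples:
            raise ValueError("no samples in the monitoring period")
        value = sum(samples) / len(samples)
        return CONDITIONS[self.condition](value, self.threshold)


# First row of the table: call drop rate above 2 % over 15 minutes.
HIGH_CALL_DROP = MetricThresholdEvent(
    "High Call Drop Rate event", "Call Drop Rate", "Cell",
    "greater than", 2.0, 15,
)
```

An event handler built this way would emit one event record whenever `crossed()` returns true for a monitoring period, and those records would become the 'Event data' used for ML training.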

< TR28.908 (v1.2.0) - Table 5.1.1.4-2: Examples of potential Object-Status Change events >

| Event-source Function | Event Name | Event description | MOI | Affected unit / parameter | Change / value |
|---|---|---|---|---|---|
| BTSs; NBs; eNBs; gNBs; BSC; RNC; NMS | HW Upgrade event | System Module HW version upgraded | BTS | System Module, Radio Module, … | HW version |
| BTSs; NBs; eNBs; gNBs; BSC; RNC; NMS | SW Upgrade event | System Software Upgraded | BTS | System Module, Radio Module, … | SW version |
| BTSs; NBs; eNBs; gNBs; BSC; RNC; NMS | Capability Enablement event | A specific Capability Enabled on the MOI | BTS | Spectrum Sharing | Spectrum Range for affected RATs |
| BTSs; NBs; eNBs; gNBs; BSC; RNC; NMS | New Sector Addition Event | A new Sector getting added to a Site | Cell | Capacity and Coverage | Number of Sectors / Cells |
| Network Management | Parameter change event | CM Parameter changes applied for specific network element | Cell | Configuration Parameter | Parameter value |
| Network Management | Home status event | MOI (e.g. site) Re-homing | Site | BSC/RNC, OSS | New BSC/ RNC/ OSS |
| SON, Analytics function | New Site event | New Site Integrated | Site/ gNB | C-SON Functions | Optimization Parameters in C-SON |
| SON, Analytics function | Predicted Congestion | Trigger for Load Balancing detected | Cell | C-SON LBO | Mobility Parameter changes |
| SON, Analytics function | Frequent Handover Failures | Trigger for MRO detected | Cell | C-SON MRO | Handover Parameter Changes |
| SON, Analytics function | PCI Conflict | PCI conflict detected | Cell | C-SON PCI | PCI Changes |
| SON, Analytics function | PRACH Conflict | PRACH conflict detected | Cell | C-SON PRACH | PRACH related parameter Changes |
| SON, Analytics function | NCR Change | New First tier neighbour getting added | Cell | C-SON ANR | NCR Changes |
| SON, Analytics function | Frequency Layer Change | New Frequency Layer added onto a site | BTS | C-SON | Frequency Layer Addition |

The following use cases are based on TR 37.817 (V17.0.0 (2022-04)). As you would notice, it seems that 3GPP is mostly targeting network-side optimization for now.

For the Network Energy Saving use case, the information to be collected is as follows:

- From local node:

  - UE mobility/trajectory prediction

  - Current/Predicted Energy efficiency

  - Current/Predicted resource status

- From the UE:

  - UE location information (e.g., coordinates, serving cell ID, moving velocity) interpreted by gNB implementation when available

  - UE measurement report (e.g., UE RSRP, RSRQ, SINR measurement, etc), including cell level and beam level UE measurements

- From neighbouring NG-RAN nodes:

  - Current/Predicted energy efficiency

  - Current/Predicted resource status

  - Current energy state (e.g., active, high, low, inactive)

For the Load Balancing use case, the information to be collected is as follows:

- From the local node:

  - Current and predicted own resource status

  - UE trajectory prediction

  - Current and predicted UE traffic

  - Predicted resource status information of neighbouring NG-RAN node(s)

- From the UE:

  - UE location information (e.g., coordinates, serving cell ID, moving velocity) interpreted by gNB implementation when available

  - UE Mobility History Information

  - UE measurement report (e.g., UE RSRP, RSRQ, SINR measurement, etc), including cell level and beam level UE measurements

- From neighbouring NG-RAN Nodes:

  - Current and predicted resource status

  - UE performance measurement at traffic offloaded neighbouring cell

For the Mobility Optimization use case, the information to be collected is as follows:

- From the UE:

  - UE location information (e.g., coordinates, serving cell ID, moving velocity) interpreted by gNB implementation when available.

  - Radio measurements related to serving cell and neighbouring cells associated with UE location information, e.g., RSRP, RSRQ, SINR.

  - UE Mobility History Information.

- From the neighbouring RAN nodes:

  - UE’s history information from neighbour

  - Position, QoS parameters and the performance information of historical HO-ed UE (e.g., loss rate, delay, etc.)

  - Current/predicted resource status

  - UE handovers in the past that were successful and unsuccessful, including too-early, too-late, or handover to wrong (sub-optimal) cell, based on existing SON/RLF report mechanism.

- From the local node:

  - UE trajectory prediction

  - Current/predicted resource status

  - Current/predicted UE traffic

The Release 18 RAN1 study on AI/ML for NR Air Interface explores the benefits of augmenting the air interface with features enabling improved support of AI/ML based algorithms for enhanced performance and/or reduced complexity or overhead.

The following is based on R1-2204060. Refer to the original document for further details.

- CSI feedback enhancement : Enhancements to CSI feedback such as frequency-domain compression have been agreed upon, while other enhancements like time-domain prediction are still under consideration (e.g., overhead reduction, improved accuracy, prediction).

- Beam management : Use AI/ML techniques to predict the best beam for a given user device at a given time and place, accounting for factors such as user location, movement, and network conditions (i.e., beam prediction in the time and/or spatial domain for overhead and latency reduction and for improved beam selection accuracy).

- Positioning accuracy enhancements for different scenarios : Compared to traditional positioning algorithms, machine-learning-assisted positioning can require less additional feedback overhead, position estimates can be made directly inside the radio access network (RAN), and it can handle heavy NLOS conditions.

NOTE : I am writing a few separate notes about AI/ML on PHY and Air Interface.
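To make the spatial-domain beam prediction idea from the list above a bit more concrete, here is a toy sketch: the UE measures RSRP on a small subset of beams only, and a predictor guesses the best beam in the full set, which is where the overhead reduction comes from. A 1-nearest-neighbour lookup is swapped in for whatever model a real implementation would use, and the training data, beam indices, and function name are all made up for illustration.

```python
import math

# Hypothetical training data: RSRP (dBm) measured on 4 beams
# -> index of the best beam in an assumed full set of 16 beams.
TRAINING_SET = [
    ([-80.0, -95.0, -105.0, -110.0], 1),
    ([-92.0, -81.0, -96.0, -108.0], 5),
    ([-106.0, -94.0, -82.0, -93.0], 10),
    ([-111.0, -104.0, -95.0, -80.0], 14),
]


def predict_best_beam(measured_rsrp, training_set=TRAINING_SET):
    """Return the best-beam label of the closest training example (1-NN)."""
    _, best_beam = min(
        training_set,
        key=lambda example: math.dist(example[0], measured_rsrp),
    )
    return best_beam
```

With this toy data, a measurement close to the second training example (strongest on the second measured beam) is mapped to full-set beam 5 without the UE having to measure all 16 beams.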

RP-242131 - Views on the study part of AIML for NR air interface - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- Proposal 1: CSI prediction is moved to normative work.

- Proposal 2: Continue the study of CSI compression till the end of Rel-19.

- Proposal 3: Continue the study of model identification & model transfer/delivery under the context of two-sided model till the end of Rel-19.

- Proposal 4: RAN plenary to further discuss whether to specify CN/OAM/OTT collection of UE-sided model training data.

- Proposal 5: If supported and specified, Option 3 (OAM) is preferred for collection of UE-sided model training data.

- Proposal 6: Continue the study on the testability and interoperability of two-sided model in RAN4 till the end of Rel-19.

Even before the 3GPP specification process is done, some modem chipsets have started coming out equipped with AI functionality in the radio stack.

- AI-Enhanced Signal Boost (Adaptive antenna tuning solution)

- AI-based channel-state feedback and dynamic optimization

- AI-based mmWave beam management for superior mobility and coverage robustness

- AI-based network selection for superior mobility and link robustness

- AI-based adaptive antenna tuning for up to 30% improved context detection for higher average speeds and coverage

- 3GPP TR/TS/General

- 3GPP TDocs

- R1-2401766 : Session notes for 9.1 (Artificial Intelligence (AI)/Machine Learning (ML) for NR Air Interface) - TSG RAN WG1 #116 – February 26th – March 1st, 2024

- R1-2401599 - FL summary #3 for AI/ML in management : 3GPP TSG RAN WG1 #116 - February 26th – March 1st, 2024

- R1-2401598 : FL summary #1 for AI/ML in management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401597 : FL summary #1 for AI/ML in management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401596 : FL summary #1 for AI/ML in management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401573 : Final Summary for other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401572 : Summary #4 for other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401571 : Summary #3 for other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401570 : Summary #2 for other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401569 : Summary #2 for other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401563 : Final summary for Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401562 : Summary#6 for Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401561 : Summary#5 for Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401560 : Summary#4 for Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401559 : Summary#3 for Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401558 : Summary#2 for Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401557 : Summary#1 for Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401432 : Specification support for AI-ML-based positioning accuracy enhancement - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401431 : Specification support for AI-ML-based beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401430 : Work plan for Rel-19 WI on AI and ML for NR air interface - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401225 : Discussion on other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401224 : Discussion on AI/ML for CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401179 : Discussion on support for AI/ML beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401155 : Discussion on Additional Study of AI/ML for CSI Compression- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401154 : Discussion on Specification Support of AI/ML for Positioning Accuracy Enhancement- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401153 : Discussion on Specification Support of AI/ML for Beam Management- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401152 : Discussion on study of AIML for CSI compression- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401152 : Discussion on study of AIML for CSI compression- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401151 : Discussion on study of AIML for CSI prediction- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401138 : View on AI/ML model and data- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401137 : Design for AI/ML based positioning- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401135 : Discussions on AI/ML for CSI feedback- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401134 : Discussions on AI/ML for beam management- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401111 : Discussion on other aspects of AI/ML model and data- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401110 : Discussion on the AI/ML for CSI compression- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401109 : Discussion on the AI/ML for CSI prediction- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401107 : Discussion on AI/ML for beam management- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401043 : Discussion on AI/ML based beam management- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401042 : Discussion on support for AIML positioning- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401038 : Discussion on other aspects for AI/ML for air interface- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401037 : Discussion on AI/ML for CSI compression- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401036 : Discussion on AI/ML for CSI prediction- 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401006 : Discussion on other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401005 : Discussion on CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401004 : Discussion on AI based CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2401002 : Discussion on AI/ML beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400916 : Study on CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400915 : Study on CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400914 : Discussions on AI/ML for beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400910 : Discussion on other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400909 : Discussion on AI/ML-based CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400908 : Discussion on AI/ML-based CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400847 : Discussion on CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400846 : Discussions on CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400845 : Discussions on AI/ML for positioning accuracy enhancement - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400844 : Discussions on AI/ML for beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400842 : Discussion on AI/ML for CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400834 : On aspects of AI/ML model and data framework - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400833 : On AI/ML for CSI Compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400832 : On AI/ML for CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400831 : AI/ML specification support for beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400797 : Other aspects of AI/ML Model and Data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400796 : AI/ML for CSI Compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400795 : AI/ML for CSI Prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400794 : AI/ML for Positioning Accuracy Enhancement - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400793 : AI/ML for Beam Management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400781 : Discussion on specification support for AI/ML-based beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400780 : Discussion on other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400770 : Discussion on other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400769 : Discussion on CSI compression with AI/ML - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400768 : Discussion on CSI prediction with AI/ML - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400767 : Discussions on specification support for AI/ML positioning accuracy enhancement - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400766 : Discussion on specification support on AI/ML for beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400758 : On other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400757 : Discussion on AI/ML-based positioning accuracy enhancement - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400724 : Discussion for further study on other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400723 : Discussion for further study on AI/ML-based CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400722 : Discussion for further study on AI/ML-based CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400721 : Discussion for supporting AI/ML based positioning accuracy enhancement - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400720 : Discussion for supporting AI/ML based beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400696 : Additional study on other aspects of AI model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400695 : Additional study on AI-enabled CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400694 : Additional study on AI-enabled CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400693 : Specification support for AI-enabled positioning - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400692 : Specification support for AI-enabled beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400653 : Discussion on CSI compression for AI/ML - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400621 : Additional study on AI/ML-based CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400620 : Additional study on AI/ML-based CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400619 : On specification for AI/ML-based positioning accuracy enhancements - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400618 : On specification for AI/ML-based beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400546 : Discussion on two-sided AI/ML model based CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400545 : Discussion on one side AI/ML model based CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400544 : Discussion on AI/ML-based positioning accuracy enhancement - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400466 : Discussion on other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400465 : Discussion on specification support for beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400464: Discussion on CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400463: Discussion on CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400422: Study on other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400421: Study on AI/ML-based CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400420: Study on AI/ML-based CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400419: Discussion on AI/ML-based positioning accuracy enhancement - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400418: Discussion on AI/ML-based beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400396: ML Model and Data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400395: ML based CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400394: ML based CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400393: ML based Positioning - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400392: ML based Beam Management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400380: Other study aspects of AI/ML for air interface - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400378: AI/ML for CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400377: Specification support for AI/ML for positioning accuracy enhancement - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400376: Specification support for AI/ML for beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400320: Discussion on other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400319: Discussion on AI/ML for CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400318: Discussion on AI/ML for CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400274: Specification support for AI/ML beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400266: Discussion on study for other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400264: Discussion on study for AI/ML CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400263: Discussion on specification support for AI/ML beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400236: Other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400235: Discussion on CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400234: Discussion on CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400172: Discussion on other aspects of AI/ML - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400171: AI/ML for beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400169: Discussion on specification support for AI/ML positioning accuracy enhancement - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400166: AI/ML for CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400165: AI/ML for CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400150: Discussion on CSI compression for AI/ML - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400147: Discussion on other aspects of the additional study for AI/ML - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400146: Discussion on CSI prediction for AI/ML - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400145: Discussion on positioning accuracy enhancement for AI/ML - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400144: Discussion on beam management for AI/ML - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400095: Discussion on potential performance enhancements/overhead reduction with AI/ML-based CSI feedback compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400094: Discussion on other aspects of AI/ML model and data on AI/ML for NR air-interface - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400048: Discussion on other aspects of AI/ML model and data - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400047: Discussion on AIML for CSI compression - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400046: Discussion on AIML for CSI prediction - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- R1-2400045: Discussion on AIML for beam management - 3GPP TSG RAN WG1 #116 February 26th – March 1st, 2024

- 3GPP TSG RAN Meeting #105

- RP-242131 : Views on the study part of AIML for NR air interface - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-242099 : Discussion on Rel 19 AI/ML for Air Interface - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-242095 : Discussion on RAN4 scope for AI mobility - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-242094 : DRAFT LS on RAN study on AI/ML for NR air interface - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-242030 : Checkpoint for AI/ML PHY - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241991 : Discussions on the study objectives of AI/ML for NR Air Interface - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241975 : On the checkpoint of Rel-19 AI/ML based CSI prediction - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241969 : Discussion on AI/ML for air interface for R19 - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241955 : AI/ML Logistical Issues - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241908 : AI/ML UE Sided data collection – September 2024 checkpoint - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241902 : On the checkpoints for Rel-19 AI/ML for NR Air Interface - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241862 : Views on AI/ML based CSI compression in Rel 19 - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241859 : Views on Rel 19 AI/ML for NR air interface - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241818 : Discussion on RAN4 scope for Rel 19 AI/ML for mobility - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241798 : Discussion on AI/ML for air interface WI - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241769 : New WID on Enhancements for Artificial Intelligence (AI)/Machine Learning (ML) for NG-RAN - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241768 : FS_NR_AIML_NGRAN_enh - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241759 : R19 AI/ML for NR air interface study objective checkpoint discussion - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241740 : WID Revision on AI/ML for NR Air Interface - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- RP-241717 : LS in R3-243969 on LS on terminology definitions for AI-ML in NG-RAN from RAN3 - 3GPP TSG RAN Meeting #105 - September 9-12, 2024

- 3GPP TSG-RAN WG1 Meeting #118bis

- R1-2407649 : AI/ML for Positioning Accuracy Enhancement - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407653 : Discussion on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407654 : Discussion on AI/ML for positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407655 : Discussion on AI/ML for CSI prediction - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407656 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407657 : Discussion on other aspects of the additional study for AI/ML - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407694 : Discussion on AIML for beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407695 : Discussion on AIML for CSI prediction - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407697 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407728 : Discussion on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407852 : Other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407894 : Discussion on AI/ML for CSI prediction - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407895 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407896 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407938 : Discussion on AI/ML beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407939 : Discussion on AI/ML CSI prediction - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407940 : Discussion on AI/ML CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407941 : Discussion on other aspects of AI/ML in NR air interface - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407951 : Discussion on AI/ML-based positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407952 : Discussion on UE-side AI/ML model based CSI prediction - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407953 : Views on two-sided AI/ML model based CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407954 : Further study on AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407988 : ML based Beam Management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407989 : ML based Positioning - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407990 : ML based CSI prediction - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407991 : ML based CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2407992 : ML Model and Data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408027 : Discussion on AI/ML-based beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408028 : Discussion on AI/ML-based positioning - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408029 : Discussion on AI/ML-based CSI prediction - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408030 : Study on AI/ML-based CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408031 : Study on AI/ML for other aspects - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408158 : On specification for AI/ML-based beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408162 : Additional study on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408222 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408268 : AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408269 : Discussion on other aspects of AI/ML - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408279 : Discussion on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408292 : Other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408363 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408390 : Specification support for AI-enabled beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408394 : Additional study on other aspects of AI model and data (*) - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408401 : Discussions on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408428 : AI/ML specification support for beam management (*) - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408432 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408438: On other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408452: Discussion on AI/ML beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408453: Discussion on specification support for AI/ML-based positioning - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408454: Discussion on AI-based CSI prediction - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408455: Discussion on AI-based CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408456: Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408522: Discussion on support for AI/ML positioning - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408541: Discussion on other aspects of AI/ML for air interface - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408544: AI/ML for Beam Management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408545: AI/ML for Positioning Accuracy Enhancement - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408546: AI/ML for CSI Prediction - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408547: AI/ML for CSI Compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408548: Other aspects of AI/ML for two-sided model use case - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408549: Draft LS reply on applicable functionality reporting for AI/ML beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408560: Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408609: Discussion on specification support for AI/ML-based positioning accuracy enhancements - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408806: Discussions on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408807 : Discussions on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408837 : Specification support for AI-ML-based beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408838 : Specification support for AI-ML-based positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408841 : Other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408885 : Other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408903 : Design for AI/ML based positioning - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408908 : Discussions on specification support for positioning accuracy enhancement for AI/ML - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408923 : Discussion on specification support for AI/ML Positioning Accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408924 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408952 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408957 : Remaining issues on Rel-18 UE Features - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2408959 : Specification support for AI/ML beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409007 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409114 : FL summary #0 for AI/ML in beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409115 : FL summary #1 for AI/ML in beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409116 : FL summary #2 for AI/ML in beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409117 : FL summary #3 for AI/ML in beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409118 : FL summary #4 for AI/ML in beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409156 : Summary#1 of Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409157 : Summary#2 of Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409158 : Summary#3 of Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409159 : Summary#4 of Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409160 : Summary#5 of Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409161 : Final summary of Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409168 : Summary #1 for other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409169 : Summary #2 for other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409170 : Summary #3 for other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409171 : Summary #4 for other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409195 : Updated summary of Evaluation Results for AI/ML CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409278 : Summary#3 of AI 9.5.3 for R19 NES - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409285 : Summary#6 of Additional study on AI/ML for NR air interface: CSI compression - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- R1-2409305 : FL summary #5 for AI/ML in beam management - 3GPP TSG-RAN WG1 Meeting #118bis - October 14th – 18th, 2024

- 3GPP TSG-RAN WG1 Meeting #120

- R1-2500015 : Reply LS to SA5 on AIML data collection - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500016 : Reply LS to SA2 on AIML data collection - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500029 : Reply on AIML data collection - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500030 : Reply LS on AIML data collection - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500031 : Reply LS on AIML data collection - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500050 : Discussion on specification support for AI/ML-based beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500051 : Discussion of CSI compression on AI/ML for NR air interface - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500052 : Discussion on other aspects of AI/ML model and data on AI/ML for NR air-interface - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500057 : AI/ML for CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500058 : AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500060 : AI/ML for Positioning Accuracy Enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500066 : Discussion on AI/ML-based beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500067 : Discussion on AI/ML-based positioning enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500068 : Discussion on specification support for AI CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500069 : Discussion on study for AI/ML CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500070 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500089 : Discussion on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500090 : Discussion on AI/ML for positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500091 : Discussion on AI/ML for CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500152 : Discussion on other aspects of the additional study for AI/ML - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500159 : Discussion on AIML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500160 : Discussion on AIML for CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500161 : Discussion on AIML for CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500162 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500201 : Discussion on AI/ML-based beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500202 : Discussion on AI/ML-based positioning - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500203 : Discussion on AI/ML-based CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500204 : Further study on AI/ML-based CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500205 : Further study on AI/ML for other aspects - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500254 : Discussion on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500255 : Discussion on other aspects of AI ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500276 : Discussion on AI/ML for CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500277 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500278 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500341 : Other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500388 : LS on LMF-based AI/ML Positioning for Case 2b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500389 : LS on LMF-based AI/ML Positioning for case 3b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500390 : Specification Support for AI/ML for Beam Management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500391 : AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500392 : Discussion on other aspects of AI/ML - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500407 : Discussion on AI/ML for CSI Compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500408 : Other aspects of AI/ML Model and Data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500465 : On specification for AI/ML-based beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500466 : On specification for AI/ML-based positioning accuracy enhancements - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500467 : On specification for AI/ML-based CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500468 : Additional study on AI/ML-based CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500469 : Additional study on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500512 : Discussion on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500518 : Discussion on specification support for AI-ML based positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500529 : Discussion on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500533 : On AI/ML-based CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500534 : On AI/ML-based CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500545 : AI/ML based Beam Management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500546 : AI/ML based Positioning - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500547 : AI/ML based CSI Prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500548 : AI/ML based CSI Compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500549 : AI/ML Model and Data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500555 : Discussion on AIML beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500556 : Discussion on AIML positioning - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500557 : Discussion on AIML CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500558 : Discussion on AIML CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500559 : Discussions on other aspects of AIML in NR air interface - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500565 : Discussions on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500568 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500571 : Discussion on reply LS on LMF-based AI/ML Positioning for Case 2b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500572 : Draft reply LS on LMF-based AI/ML Positioning for Case 3b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500591 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500606 : Discussion on specification support for AIML based positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500616 : Discussion on LS on LMF-based AI/ML Positioning for case 3b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500617 : Discussion on LS on LMF-based AI/ML Positioning for Case 2b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500618 : [Draft] Reply LS on LMF-based AI/ML Positioning for Case 2b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500619 : [Draft] Reply LS on LMF-based AI/ML Positioning for Case 3b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500635 : AI/ML specification support for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500636 : Specification impacts for AI/ML positioning - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500638 : On AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500639 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500643 : Specification support for AI/ML for positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500644 : Specification Support for AI/ML CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500686 : Specification support for AI-enabled beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500687 : Specification support for AI-enabled positioning - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500688 : Specification support for AI-enabled CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500689 : Additional study on AI-enabled CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500690 : Additional study on other aspects of AI model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500695 : Draft reply LS on LMF-based AI/ML Positioning for Case 2b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500696 : Draft reply LS on LMF-based AI/ML Positioning for Case 3b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500708 : Discussion for SA2 LS on LMF-based AI/ML Positioning for Case 2b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500710 : Discussion on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500711 : Discussion on AI/ML-based positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500712 : Discussion on AI/ML model based CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500713 : Views on two-side AI/ML model based CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500714 : Further study on AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500745 : Discussion for SA2 LS on LMF-based AI/ML Positioning for Case 3b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500746 : Discussion on support for AIML positioning - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500766 : Enhancements for AI/ML enabled beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500767 : Discussion on Specification Support for AI/ML-based positioning - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500768 : Discussion on AI/ML based CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500769 : Discussion on AI based CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500770 : Discussion on other aspects of AI/ML models and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500816 : Discussion on AI/ML-based CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500817 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500834 : Discussion for supporting AI/ML based beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500835 : Discussion for supporting AI/ML based positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500836 : Views on AI/ML based CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500837 : Views on additional study for AI/ML based CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500838 : Views on additional study for other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500903 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500904 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500925 : Discussion on specification support on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500926 : Discussion on specification support for AIML-based positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500928 : Discussion on CSI compression with AI/ML - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500929 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500962 : Discussion on specification support for AI/ML beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500970 : AI/ML for Beam Management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500971 : AI/ML for Positioning Accuracy Enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500972 : AI/ML for CSI Prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500973 : AI/ML for CSI Compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500974 : Other aspects of AI/ML for two-sided model - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500976 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2500991 : AI/ML positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501013 : Discussion on specification support for AIML-based beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501014 : AI/ML - Specification support for CSI Prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501015 : Additional study on AI/ML for NR air interface - CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501079 : Other Aspects of AI/ML framework - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501080 : Discussion on AI/ML for CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501085 : AI/ML for Beam Management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501104 : Discussion on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501119 : AI/ML for CSI feedback enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501131 : Discussion on specification support for AI/ML based positioning accuracy enhancements - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501132 : Discussion on specification support for AI/ML based CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501143 : Rapporteur view on higher layer signalling of Rel-19 AI-ML for NR air interface - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501144 : Specification support for AI-ML-based beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501145 : Specification support for AI-ML-based positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501148 : Other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501190 : Discussion on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501191 : Discussion on AI/ML for positioning accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501192 : Discussion on AI/ML for CSI prediction - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501193 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501194 : Discussion on other aspects of AI/ML model and data - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501226 : Discussion on AI/ML based beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501235 : Discussion on AIML based beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501245 : Remaining issues for UE-initiated beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501247 : Discussion on Specification Support of AI/ML for Positioning Accuracy Enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501259 : Design for AI/ML based positioning - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501262 : Discussions on AI/ML for beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501272 : Remaining issues on CSI enhancements for large antenna arrays and CJT - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501273 : Discussion on specification support for AI/ML Positioning Accuracy enhancement - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501274 : Discussion on AI/ML for CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501283 : Discussions on specification support for positioning accuracy enhancement for AI/ML - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501302 : Discussion for SA2 LS on LMF-based AI/ML Positioning for Case 2b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501303 : Discussion for SA2 LS on LMF-based AI/ML Positioning for Case 3b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501304 : Draft reply LS on LMF-based AI/ML Positioning for Case 2b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501305 : Draft reply LS on LMF-based AI/ML Positioning for Case 3b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501306 : Draft LS reply on LMF-based AI/ML Positioning for Case 2b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501308 : Draft LS reply on LMF-based AI/ML Positioning for Case 3b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501330 : Discussion on the LS reply to SA2 on AI/ML Positioning of Case 2b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501331 : Discussion on the LS reply to SA2 on AI/ML Positioning of Case 3b - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501333 : Specification support for AI/ML beam management - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501350 : Discussion on two-sided AI/ML model based CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- R1-2501354 : Discussion on two-sided AI/ML model based CSI compression - 3GPP TSG-RAN WG1 Meeting #120 - February 12th–16th, 2025

- General Reading

YouTube

YouTube (Korean)

|

|